‘The only thing we learn from the past is how little we’ve learned from our mistakes’.

Georg Wilhelm Friedrich Hegel

‘Those who cannot remember the past are condemned to repeat it’

George Santayana

‘Blessed are the forgetful: for they get the better even of their blunders’.

Friedrich Nietzsche

Inside the black box of classroom practice…

Formative assessment helps pupils understand how to improve

but requires teachers to focus on what works best and change their habits of practice.

i. What AfL is: getting pupils to understand how to improve.

Before I started to teach, my Head of Department said, ‘If you read one thing, read “Inside the Black Box”.’ So I did. AfL seemed to revolve around getting and providing effective feedback (1999). Research that summarised 250 assessment articles over a decade argued that AfL ‘could do more to improve educational outcomes than almost any other investment in education’ (Black et al 2003, 2). International evidence corroborated this: a synthesis of 250 studies concluded that ‘the gains in achievement are amongst the largest ever reported for educational interventions’ (Marzano 2007, 13). Studies from Natriello (1987), Crooks (1988), Kluger & DeNisi (1996) and Nyquist (2003) backed it up. John Hattie’s research, which I summarise here, enthroned feedback as the most effective teaching strategy, bar none. Clearly, all this work on questioning, peer- and self-assessment, and feedback could be powerful.

AfL in a nutshell.

ii. What AfL became and why: hijacked, hoop-jumping gimmickry

Few education concepts have been more distorted in a shorter time span than formative assessment: teachers falling prey to gimmicks, schools mandating unhelpful AfL policies, and government policy confusing AfL with national levels. Lollipop sticks, coloured party cups, red-amber-green traffic lights five times a lesson, thumbs-up-or-down, starred self-confidence post-its, scribbled emoticons for end-of-lesson feelings, and strange and unhelpful acronyms like WALT & WILF all became a kind of reductio ad absurdum. Many senior leadership teams then enforced the letter of the AfL law rather than the spirit of it: school-mandated lesson plans, observation rubrics, progress checks three times a lesson and endless mini-plenaries; objective sharing in rigid but often counterproductive formats required across all subjects, like ‘by the end of the lesson, students will be able to…’; peer assessment on levels that often resulted in comments like ‘5a because he tried hard and wrote neat’; marking in green pen rather than red to avoid damaging students’ self-esteem; and posters displaying tiny, illegible, incomprehensible level descriptors. Prescriptive but flashy AfL techniques like waving around mini-whiteboards became the OFSTED-enforced orthodoxy, and inspectors became obsessed with whether pupils could say what level they were on.

The last government then bureaucratised AfL into a National Strategy, which ‘practically ignored the process and pedagogical essence of AfL and its underpinning principles’. Critics described it as a ‘woeful waste’ dominated by an alignment with ‘Assessing Pupils’ Progress’ and summative assessment (Swaffield 2009, 13): a ‘misinterpretation of AfL as a mechanism for advancing students up a prescribed ladder’ (Swaffield 2009, 13). Politicians hijacked AfL and ossified it into sub-levelling and labelling students.

Dylan Wiliam himself recognises these unintended consequences as what he calls ‘policy diffraction’ or, more graphically, ‘scoring a spectacular own goal’. He gives the examples of one school that showed him ‘AfL lessons where pupils know what level they’re on, and when asked how to get to the next one, they say “listen in class and do my homework”’; and one teacher who had ‘lost the plot’ and combined ‘lollipop sticks with bunny ears that pupils held up to show they were listening’. He put these own goals down to government ‘looking for a quick win’ and being ‘in a rush’.

Perhaps, though, such spectacular own goals are being scored and the ideas misunderstood because they weren’t clearly communicated in the first place. One of Wiliam’s four top ideas was, after all, the formative use of summative tests. Confusion between AfL and APP is more understandable in that light. His 2011 book, Embedded Formative Assessment, looks set to repeat the mistakes of his past 1998 and 2001 pamphlets. A laundry list of 53 techniques includes coloured cups, popsicle sticks, red/green disks, traffic lights, WALT, WILF, two stars and a wish, ask the audience and phone a friend amongst others. A decade on, it seems we have learned little from such unthinking, hoop-jumping blunders.

iii. How to make formative assessment work: examples, questions and feedback.

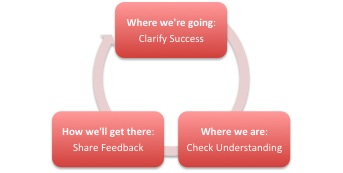

For a classroom teacher who teaches an average of over 200 pupils more than once a week, checking and improving the subject understanding of every individual pupil is a huge challenge. Wiliam clarifies it in a helpful grid, and I simplify it below as a cycle. I then summarise the five (out of 53!) ideas for applying formative assessment that, in my experience, have the highest impact in helping pupils understand how to improve: exemplars, hinge questions, exit tickets, checklists, and numbered questions. These dovetail with Engelmann’s advice of examples, questions, practice and feedback.

Where we’re going: Clarify Success

1. Snap & share exemplars

Compare multiple student samples of essays, paragraphs or sentences that exemplify excellent work or different levels of a success criteria rubric. Discussing strengths and areas for improvement helps students understand what excellence looks like. An easy way of getting sample work is to ‘snap and share’ from students’ books with the camera on your smartphone, which saves you or them typing it all up.

Where we are: Check Understanding

Just as a pilot guides a plane toward its destination by taking constant readings and making careful adjustments in response to wind, air currents and weather, so a teacher within and across lessons must check whether and to what extent students understand what they need for the destination or end-of-unit assessment. Both of the following ideas are ‘all-student response mechanisms’.

To reach your destination, adjust direction.

2. Ask & adapt to hinge questions

Asking a key question at the right moment in a lesson allows you to see at a glance, across your whole class, who gets it and who doesn’t, then adapt accordingly. Multiple-choice questions work well for this as you can scan the class instantaneously, then probe and follow up misunderstandings. Wiliam suggests the options of using mini-whiteboards or ABCD cards, though the content of questions matters most.

3. Pose & use exit tickets

At the end of lessons, and for longer responses, ask a single question designed to assess whether students have learned what you’ve tried to teach them by getting them to apply it, and get all students to write an answer in their books or on cards you’ll take in.

How we’ll get there: Share Teacher-, Self- & Peer-Feedback

To be effective, feedback must provide a recipe for future action. Otherwise, feedback is more like the scene in the rearview mirror than the windshield. Feedback only functions formatively if it is used by the learner in improving performance. Wiliam says he often asks teachers whether they think that their students spend as much time using their feedback as it took to give it. Typically, he says, fewer than 1 percent of teachers believe this to be the case. His advice is not to provide students with feedback unless you allow time, in class, to use the feedback to improve their work. Feedback should be focused on the rubric of success criteria, as less is often more, and it should be more work for the recipient than the donor.

Feedback as a windshield not a rearview mirror

4. Tick & target with a preflight checklist

Peer and self-assessment works well through a simple checklist of requirements and success criteria. You get students to tick off criteria they or their peer have met, and write targets for criteria as yet unmet.

5. Mark & require responses to numbered question options

Before you mark 30 books, decide on six or so questions that you might want students to respond to next lesson when they get their books back. As you read, write a number or two for the questions that each student will have to answer for ten minutes next lesson. In the lesson, display the questions on the board, and get the students to copy their questions and respond to them. It takes you just a minute to read the work and write two numbers, and takes them ten minutes to respond. Instead of numbers, you could use icons, for example.

iv. How we can improve teaching: focused habit change

Wiliam says all the research shows the best way of improving student achievement is by improving teaching quality: the most effective teachers help their students learn at four times the rate of the least effective teachers. But he argues that just improving the quality of entrants by raising the threshold for entry, whilst getting rid of underperforming teachers with rigorous deselection, takes too long. We need to help improve the quality of teachers already working in our schools: the ‘love the one you’re with’ strategy.

Old habits die hard.

Wiliam then uses a Weightwatchers analogy. Everyone knows that the route to a healthy body is to exercise more and eat less. But Weightwatchers realise they’re not in the knowledge-giving business; they’re in the habit-changing business. Similarly, most teachers know that questioning and feedback are the ways to improve learning; but old habits die hard. If you’re serious about improving teaching, he says, you’d better get in the habit-changing business.

If you don’t change what teachers do in classrooms, students don’t benefit: that’s why so much structural and curriculum change has made so little impact. But no one has cracked this idea of habit change. Mixing his metaphors slightly, and returning to the pilot analogy, he thinks the reason teachers resist change is that it’s scarily like changing an engine in flight.

His solution is Teacher Learning Communities, with the principles of choice, small steps and flexibility as to which of his 53 techniques teachers opt for. Monthly 2-hour sessions of 8 to 10 teachers help sustain dedicated practice and undo old habits.

Whether Dylan Wiliam’s TLCs will get the better of the AfL blunders, time will tell. In the meantime, as teachers we can first and foremost ask whether our own use of formative assessment is genuinely helping pupils understand how to improve in our subjects.

Black, P. and Wiliam, D. 2001. Inside the Black Box: Raising Standards Through Classroom Assessment. King’s College London School of Education.

Marzano, R. J. 2007 The Art and Science of Teaching: A Comprehensive Framework For Effective Instruction. Alexandria, Va.: ASCD.

Swaffield, S. 2009. The misrepresentation of Assessment for Learning – and the woeful waste of a wonderful opportunity. Unpublished paper presented at the 2009 AAIA National Conference (Association for Achievement and Improvement through Assessment), Bournemouth, 16–18 September 2009.

Wiliam, D. 2011. Embedded Formative Assessment.

A quite brilliant post – thank you. I have read a lot of accounts of AfL and this drives right through the industry-making rubbish and hits the nail on the head. Lots of practical tips. I will be sharing and saving this post!

Reblogged this on huntingenglish and commented:

Do read this excellent account of AfL that cuts through the rubbish that has tainted the concept since its inception. Brilliantly clear, well researched and really practical.

An excellent post that will definitely support teachers’ practical understanding of AfL.

This was very useful, thank you; I am looking for ways to get students to act on feedback and will be feeding back your ideas at my next department meeting and also when we set up our TLC.

Excellent! There’s so much rubbish in teaching, how refreshing to hear someone cut to the chase! Can I add that having a great TA who buys into the formative feedback cycle helps. I’ve got a system now where I do all the ‘stop-check’ part in class (I teach eight-year-olds) but I also pounce on the books straight away, checking the hinge points for success. Anyone (usually no more than two or three because I stopped and scouted enough) who got lost spends five minutes the same day, 1:1 with my TA, ensuring they learnt what I intended. As a teacher running the show, I’d never be able to do that myself, but she’s so on board, she knows exactly what to do, and takes them off to a corner during our daily reading sessions, or at the start of PE or something, and gets them back on track. You don’t need gimmicks, you need common sense!

An excellent post. It really does summarise a big topic very well. I think that you’re maybe a little too harsh on Wiliam’s 53 techniques. Seen as strategies that you might want to experiment with in order to extend the ways that you use formative assessment, they are fine. Of course the problem comes when managers try to prescribe them, and I assume that you’re suggesting that Wiliam should have foreseen exactly this response. This sort of prescription is nonsense and reminds me of a recent Twitter debate on no-hands / lollipop sticks. I personally love mini whiteboards, a strategy that is often described as gimmicky on Twitter. They work so well in my maths and physics classes but I wouldn’t insist on all maths and physics teachers using them, and certainly not a teacher of literature. The principles matter but the exact form does not. Therefore, I say thank you for distilling formative assessment down to these principles.

By the time Paul Black and I concluded our review of research on classroom formative assessment in 1998, we had become convinced that attention to these aspects of practice had the potential to increase student achievement substantially—and perhaps more than any other kinds of changes to classrooms. However, we also knew, from our previous work, that helping teachers develop their practice in the directions the research indicated might be most effective was far more than just telling teachers about the findings. For example, the research suggested that task-involving feedback was likely to be far more effective than ego-involving feedback, but when teachers asked us whether a particular kind of feedback was task-involving or ego-involving, we realized that we did not know—it depended on the relationship between student and teacher. That is why Kluger and DeNisi (1996), in their monumental review of every single published research study on feedback from 1905 to 1995, concluded that whether feedback improved performance, and if so by how much, were questions that were simply not worth asking. Feedback might increase achievement in the short term, at the expense of deeper learning in the longer term. Rather, they suggested, feedback research should focus on the kinds of responses the feedback cued in learners—put simply, it is impossible to understand feedback independent of the relationship between teacher and student. That is why we stated, in “Inside the black box” that each teacher would need to find their own way of implementing these ideas in ways that were relevant to their own classrooms.

We could, of course, like most educational researchers, have left it there, suggesting that teachers should work out how to apply these research findings to their own contexts, but this seemed to us to be unfair, because we were leaving the very hardest part of all—taking into account the effects of context—to the teachers. That is why we took the rather unusual step of sharing with teachers specific techniques, such as lollipop sticks, coloured cups, and mini-whiteboards. The danger of such an approach, as Joe Kirby amply demonstrates in his blog, is that the adoption is tokenistic, focused on the gimmicks, with no real improvement in either classroom practice or student achievement. However, it seemed to us then (and still seems to me now) that this was better than just giving teachers the bland research findings and leaving them on their own to find out how to apply the principles. And actually, calling on students to answer questions at random, or making students share answers on mini-whiteboards, is likely to increase student engagement even if teachers have no idea why they are doing it. Sometimes, as Millard Fuller, the founder of Habitat for Humanity, said, in certain matters, it’s easier to get people to act their way into a new way of thinking than it is to get them to think their way into a new way of acting.

Which brings me to “Embedded formative assessment”. It does list 54 practical techniques that teachers can use to develop their practice, but as the final section of the book makes clear, the idea is that each teacher works on one or two, and certainly no more than three, of these techniques at any one time. It is also important to realize that the techniques are not mine. If “Embedded formative assessment” contributes anything, it relates techniques used by teachers to research literature so that when teachers adapt these techniques for use in their own classrooms (as they must—no technique works identically in every classroom) they can adapt them in ways that are likely to be faithful to the original research, and thus retain the benefit. This seems to me the most useful role for educational research. Educational research can never lead practice—great teaching is produced by teacher creativity—but what the research can do is to identify some avenues as more fruitful than others, and also make sense of the practice of effective teachers, in particular by identifying which aspects of practice are likely to be essential to success, and which are idiosyncrasies of individual teachers.

It may, as Joe Kirby suggests, have been a “blunder” to list techniques, and of course I was aware of the possible danger of tokenistic adoption by school leaders trying to do their best in a culture of accountability that takes no account of the differences in children’s readiness to learn. But it seems to me rather odd not to share teachers’ good ideas with other professionals because they might get misused.

Gimmicks are gimmicks when used superficially. I’ve seen some awfully pedestrian uses of lolly sticks and so on but also some excellent applications where the colour coding has been used to prompt questioning or a change in direction in the lesson. To simply dismiss them is like saying that interactive whiteboards or computers are gimmicks. It’s how you use them that matters. Struggling, for example, to break the habit of hands flying up in the air, I started insisting that all children had to put their hands up, but holding a red or green card: I know/have something to say, or I don’t know/have anything to say. On its own, this is a gimmick, but when used to pair up children for sharing those responses, or to realise that whatever it is I’m trying to get across is simply not working, then it becomes a useful strategy. I found Joe’s blog really useful and interesting but I think we need to be careful not to rubbish some very thoughtful and engaging ideas.

If the movement for research-based evidential backing for classroom interventions and teaching strategies leads to a greater number of teachers considering their own practices more carefully and challenging school leaders trying to impose one-size-fits-all AfL quick fixes, then surely it can only be an improvement on the ‘wait for an INSET day to see what else needs to be incorporated into lessons’ approach that has been prevalent in many schools for far too long.

Absolutely spot on – a lot of ideas picked up by teachers and SLT whilst losing sight of the original point.

There’s one thing I’d add:

4. Tick & target with a preflight checklist

Peer and self-assessment works well through a simple checklist of requirements and success criteria. You get students to tick off criteria they or their peer have met, and write targets for criteria as yet unmet.

Success criteria as checklists are great for processes. For things like writing though, checklists are a little misleading. For example, when teaching children to write persuasively, you’d show them persuasive writing and draw out what it is that makes them persuasive. They’ll see that each piece of writing has different persuasive devices and that merely having ‘all the persuasive devices’ does not necessarily make it effective.

For writing, the question is not ‘Which of the criteria have you used?’ but ‘Where have you done it most effectively?’ I’ve blogged about it here:

https://thisismyclassroom.wordpress.com/2012/08/29/i-dont-know-what-to-write/

https://thisismyclassroom.wordpress.com/2013/03/17/writers-toolkit-discussion-2/

Certainly what you say is the priority – no more gimmicks.

Hi Joe,

Really interested by the idea of both hinge questions and exit slips. I am wondering how to incorporate them into my own teaching but am unsure how to phrase such questions for English, especially if the lesson focusses on a particular skill (e.g. PEEL paragraphs; varying sentence structures for effect; writing in depth and detail). It seems to me that for this you need students to write quite extensive responses that demonstrate the skill rather than concrete understanding of facts/ideas. It seems that these are more suited to subjects like Maths and Science. Are there any English-specific examples you could share?

Thanks in advance

Louisa

Jo. Super blog. If, and that’s a big if, we accept @learningspy’s idea that we cannot see learning (in a lesson), what are exit tickets etc. trying to check? If it is not learning, then it needs to be whatever it is that is likely to lead to learning. I wonder if you might give some thought to this and blog a super answer as to what we might look for that means learning is likely to happen eventually.

It depends on how you define ‘learning.’ For example, if a person can solve a two-step equation in one lesson, exceptionally well, does it two dozen times with a variety of equations, but then cannot do it a week later, or a year later – did they learn it?

If a person at the end of one period of instruction understands that they have to start each new sentence with a capital letter, but then, in the next piece of writing they hand in, more than half of the sentences don’t start with capitals, did they learn that lesson?

I would argue that, institutionally, we have a pallid definition of what it is to ‘learn’ something.

What Didau’s doing is subscribing to the idea that ‘learning is a change in the state of long-term memory; if nothing has been changed, nothing has been learnt.’

http://www.cogtech.usc.edu/publications/kirschner_Sweller_Clark.pdf

Now there is obviously a continuum here. The person who could solve two-step equations in one lesson, but not later, obviously has experience that another person who has never even seen a two-step equation does not have. And in the second example, perhaps previously they weren’t starting any sentences with a capital letter, so now they’re just in need of practice and feedback to get it up to 100%.

This is well explained by Bjork’s model of each memory having an associated retrieval and storage strength. The storage strength is the thing we are developing over time, whereas it is the retrieval strength only that we can directly measure. It is also for this reason that we have no intuitive sense of storage strength; the only thing we ever observe is retrieval.

Retrieval strength at the end of a lesson might be very high, since the idea was encountered just moments ago. The person learning is successful in whatever related task they are given (solve an equation, write a paragraph.) But, without storage strength to ‘prop up’ that retrieval strength it quickly falls away:

https://www.phase-6.com/system/galleries/pics/Vokabeltrainer/forgetting_curve_en.png

In this model, ‘learning’ is equated with ‘storage strength.’ Since, according to Bjork, storage strength never diminishes with time (where retrieval strength does), at its apex something is ‘learnt’ if and only if storage strength has become *so* high that retrieval strength will effectively always be high enough to facilitate immediate recall.

On to your question about exit tickets then. Exit tickets can check, to some extent, whether an idea has been understood in the moment. Can the person solve an equation? Without further prompting, do they get that each of those four sentences needs a capital letter at the start?

What exit tickets *cannot* check is whether that same person can still recall that next year, or a week later, or tomorrow even.

They do the job of assessing whether you passed ‘stage 1’, if you will: ‘Form correct memory.’

After that, it is the job of curriculum design to ensure that further ‘learning’ takes place i.e. the development of that memory’s storage strength over time.

Does all that make sense?

That makes complete sense. Thanks for the detailed reply. Let me continue. Some children will not be able to do well on the hinge questions nor on the exit ticket but might still show they have learned, as shown by a later test which will be a check of the change in long term memory – ie learned in the best definition we have. That is supposition on my part. Do you think there is evidence that can be gleaned in the lesson to indicate that learning will most probably happen? What are the features of those learners that can be seen during the lesson? What are the features of those who do not learn, even though they can respond short term?

If they didn’t get it in the exit ticket, then I’d say they probably won’t get it later on without intervention.

But what is ‘intervention’?

Rather than the teacher speaking to them, or another lesson, or pull-out class or whatever, ‘intervention’ in this context could just be a friend talking to them about it. Maybe it could even be just their spending a bit of time thinking about it in their own head?

I failed French completely. By Year 11 I was a hostile student: I rejected the subject and the teacher (despite being *desperate* to learn it while in Year 5, and so excited about the subject when entering Year 7). So naturally I left with a D grade.

Fast forward six months and I’m walking home from college on my own, left to my own thoughts, and for some reason the myriad chanting from the lessons came back to me. As I was turning those thoughts over, for the first time I realised, out of nowhere: “Oh! Avoir and etre, they’re just *verbs*, ‘to have’ and ‘to be’!”

I’m sure you can imagine how many times my teacher had probably told me that over 5 years. Maybe I’d have scribbled something down to that effect, somewhere, I don’t know… but I certainly never felt like I’d learnt that until that moment on my way home.

So to sum up, if they don’t show they’ve learnt something in the exit ticket, will they still come to learn it later? Maybe, but the odds are low I think, so it’s on us to act.

Can we know if someone might ‘get it’ later, when they didn’t in the exit ticket? I don’t think so. I feel that *something* has to take place between the ticket and the later assessment for that to happen, and if we weren’t the agents of that action, how could we know?

Can we determine who might ‘get it’ later, when they didn’t in the exit ticket, versus someone who still won’t? I’d suspect it will be those kids who are most proactive, and most thoughtful, who stand the greatest chance.

Reblogged this on learning@larkmead and commented:

Great blog on AfL
